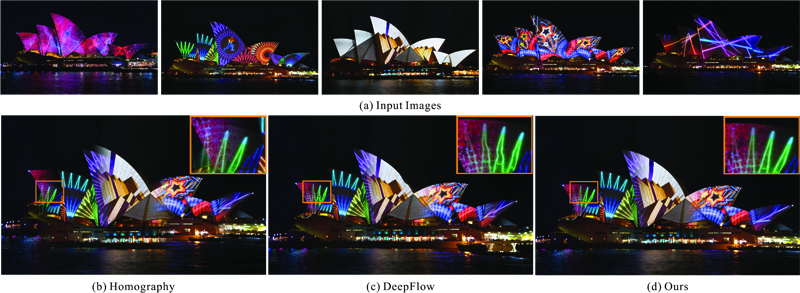

Fig. 1: Our regional foremost matching for Internet images estimates accurate regional correspondence and enables several applications.

Xiaoyong Shen Xin Tao Chao Zhou Hongyun Gao Jiaya Jia

The Chinese University of Hong Kong

|

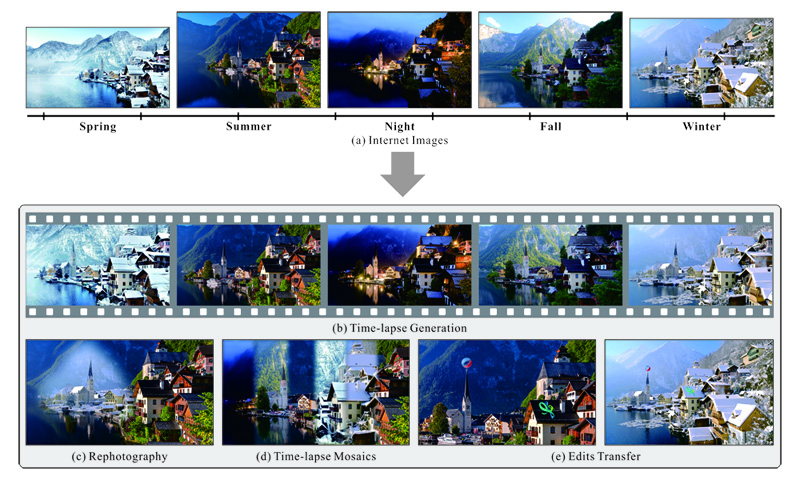

Fig. 1: Our regional foremost matching for Internet images estimates accurate regional correspondence and enables several applications. |

Abstract

We analyze the dense matching problem for Internet scene images, based on the observation that commonly only parts of the images can be matched due to variation in viewing angle, motion, objects, etc. We thus propose regional foremost matching, which rejects outlier matching points while still producing dense, high-quality correspondence in the remaining foremost regions. Our system initializes sparse correspondence, propagates matching with model fitting and optimization, and robustly detects foremost regions. We apply our method to several applications, including time-lapse sequence generation, Internet photo composition, automatic image morphing, and automatic rephotography.

Downloads

"Regional Foremost Matching for Internet Scene Images". Xiaoyong Shen, Xin Tao, Chao Zhou, Hongyun Gao, Jiaya Jia. ACM Transactions on Graphics (ToG) - Proceedings of ACM SIGGRAPH Asia, 2016.

Our Method

|

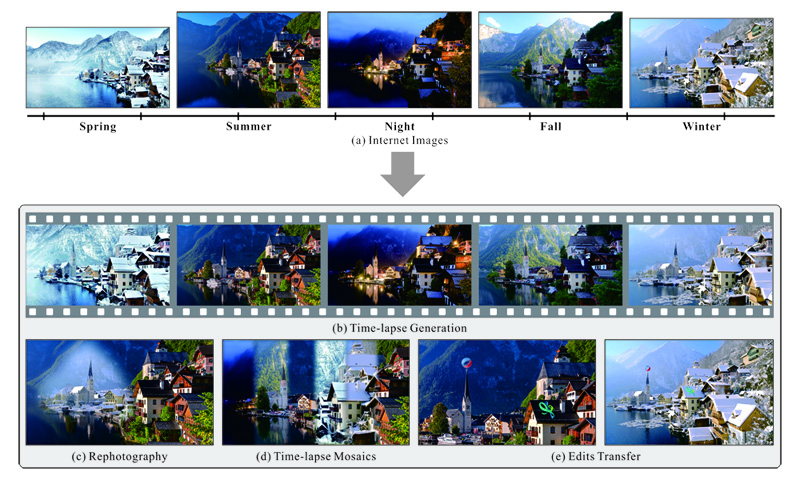

Fig. 2: Comparisons of state-of-the-art matching methods. (a) and (b) are the reference and input images, respectively. (c-h) are the warping results of (b) according to the correspondence to (a) by different methods. (i) shows our regional foremost warping result and (j) is the color-coded correspondence. |

|

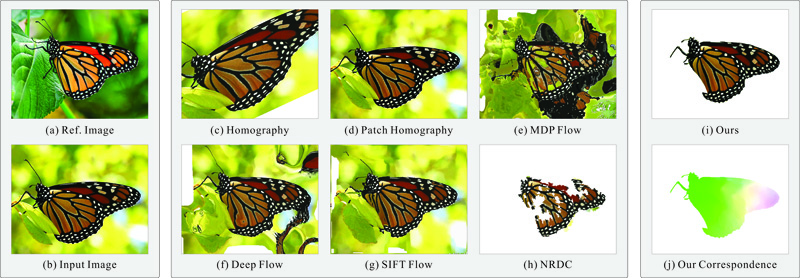

Fig. 3: Illustration of our framework. (a) Two input images. (b) Our initial correspondence. (c) Dense correspondence propagated from (b) (color encoded for visualization). (d) Refined correspondence based on (c). (e)-(f) Consistency and similarity confidence. (g) Final regional foremost correspondence. (h) Output foremost matching region. |
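To make the idea of a consistency confidence in Fig. 3 (e) more concrete, the following is a minimal sketch of a generic forward-backward consistency check, a common way to score dense correspondence. It is not the formulation used in the paper: the function names `consistency_confidence` and `foremost_mask`, the Gaussian falloff, and the parameters `sigma` and `threshold` are all assumptions made for illustration.

```python
from math import exp, hypot


def consistency_confidence(forward, backward, sigma=1.0):
    """Score each reference pixel by how well it survives a round trip.

    forward[y][x]  is a (dx, dy) offset from the reference image to the input image.
    backward[y][x] is a (dx, dy) offset from the input image back to the reference.
    Returns a grid of confidences in [0, 1]. (Hypothetical helper, not the paper's.)
    """
    h_b, w_b = len(backward), len(backward[0])
    conf = []
    for y, row in enumerate(forward):
        conf_row = []
        for x, (dx, dy) in enumerate(row):
            # Nearest pixel reached in the input image.
            tx, ty = round(x + dx), round(y + dy)
            if 0 <= tx < w_b and 0 <= ty < h_b:
                bx, by = backward[ty][tx]
                # Round-trip error: a consistent match returns to its start.
                err = hypot(dx + bx, dy + by)
                conf_row.append(exp(-err * err / (2.0 * sigma * sigma)))
            else:
                # The match leaves the input image, so it cannot be verified.
                conf_row.append(0.0)
        conf.append(conf_row)
    return conf


def foremost_mask(conf, threshold=0.5):
    """Keep only pixels whose confidence reaches the (assumed) threshold."""
    return [[c >= threshold for c in row] for row in conf]
```

Pixels whose matches fall outside the other image, or whose round trip ends far from where it started, receive low confidence and are excluded from the mask, which mirrors the general idea of rejecting unmatched regions while keeping dense correspondence elsewhere.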

Our Results

|

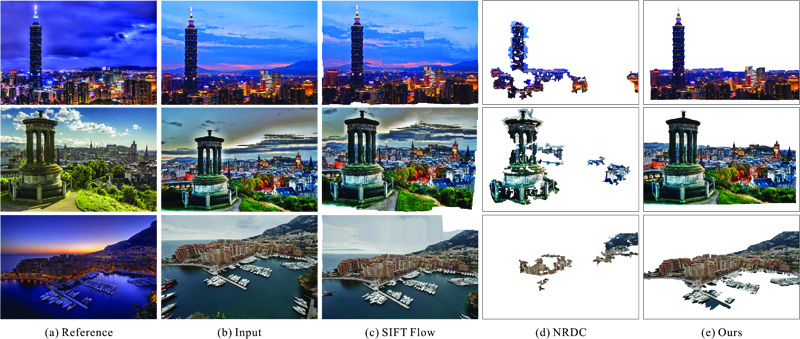

Fig. 4: Visual comparison with other methods. |

Fig. 5: Visual comparison with other methods on the data from NRDC Real World Scenes.

Applications

|

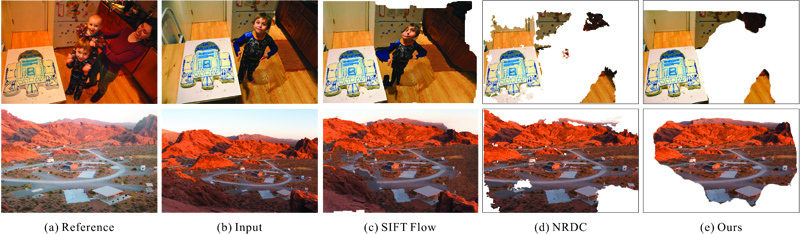

Fig. 6: An example of time-lapse sequence generation from Internet scene images. |

|

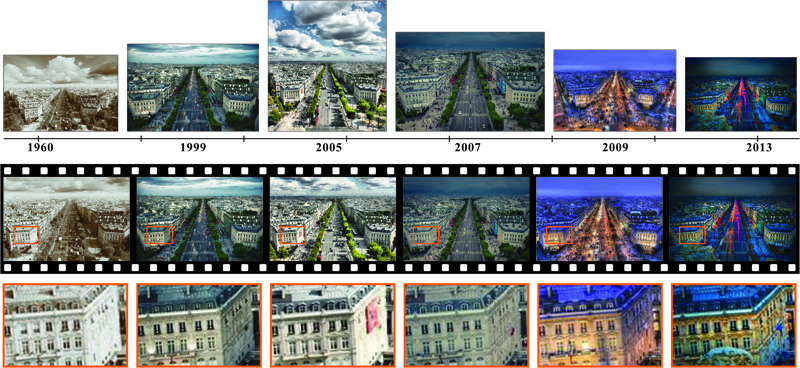

Fig. 7: Unaligned digital photomontage. In this example, we match and warp images regarding a reference as shown in (a) and (b) respectively and then compose objects in (c) using the digital interactive photomontage method. |

Fig. 8: Unaligned image fusion for time-lapse mosaics. (a) shows input images. (b) and (c) are the fusion results of global matching and DeepFlow estimation, respectively. (d) is our ghost-free fusion result.

| (a) Source Image | (b) Target Image | (c) Result of Yucer et al. | (d) Ours |

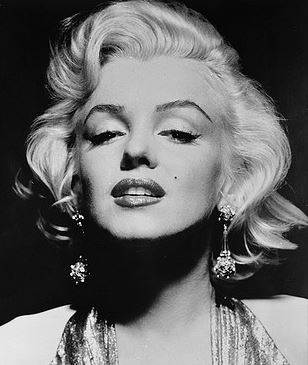

Fig. 9: Example of image editing. (a) and (b) are the source and target images respectively. (c) is the result of Yucer et al. and (d) is our result.

| (a) Input #1 | (b) Input #2 | (c) Our Result |

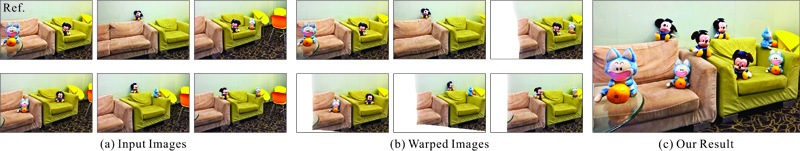

Fig. 10: Automatic image morphing. (a) and (b) are the two input images. (c) shows the results of our method.
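As a rough illustration of how correspondence can drive a morph such as the one in Fig. 10, the sketch below linearly interpolates matched point positions and cross-dissolves their colors for a blend factor `t` in [0, 1]. This is the textbook morphing recipe, assumed here for illustration only; the page does not state which interpolation the authors use, and `morph_points` and `cross_dissolve` are hypothetical names.

```python
def morph_points(src_pts, dst_pts, t):
    """Linearly interpolate matched (x, y) positions; t=0 gives src, t=1 gives dst."""
    return [
        ((1 - t) * xs + t * xd, (1 - t) * ys + t * yd)
        for (xs, ys), (xd, yd) in zip(src_pts, dst_pts)
    ]


def cross_dissolve(color_a, color_b, t):
    """Blend two colors channel by channel with the same factor t."""
    return tuple((1 - t) * a + t * b for a, b in zip(color_a, color_b))


# Example: the halfway frame between two sets of matched points.
halfway = morph_points([(0, 0), (10, 20)], [(4, 8), (10, 20)], 0.5)
```

Sweeping `t` from 0 to 1 and rendering each intermediate frame yields the morph sequence; points without a reliable match would need separate handling, which this sketch leaves out.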

References

[1] BROX, T., AND MALIK, J. 2011. Large displacement optical flow: Descriptor matching in variational motion estimation. TPAMI 33, 3, 500–513.

[2] LIU, C., YUEN, J., AND TORRALBA, A. 2011. SIFT flow: Dense correspondence across scenes and its applications. TPAMI 33, 5, 978–994.

[3] HACOHEN, Y., SHECHTMAN, E., GOLDMAN, D. B., AND LISCHINSKI, D. 2011. Non-rigid dense correspondence with applications for image enhancement. TOG 30, 4, 70.

[4] XU, L., JIA, J., AND MATSUSHITA, Y. 2012. Motion detail preserving optical flow estimation. TPAMI 34, 9, 1744–1757.

[5] WEINZAEPFEL, P., REVAUD, J., HARCHAOUI, Z., AND SCHMID, C. 2013. DeepFlow: Large displacement optical flow with deep matching. In ICCV, 1385–1392.

[6] REVAUD, J., WEINZAEPFEL, P., HARCHAOUI, Z., AND SCHMID, C. 2016. DeepMatching: Hierarchical deformable dense matching. IJCV 120, 3, 300–323.